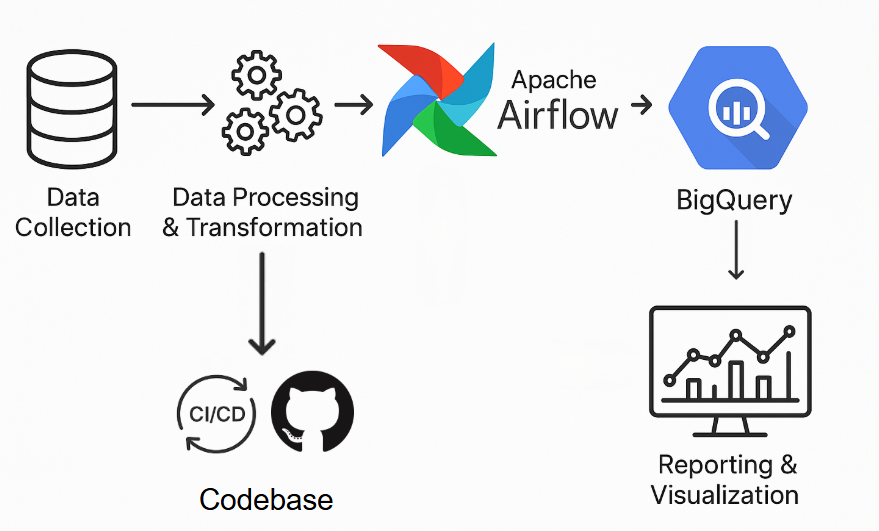

Data Pipelines and Orchestration

Data collection

- APIs (Google Classroom, Illuminate, and other instructional platforms)

- SFTP transfers from SIS and district systems

- File-based feeds when direct integrations are not yet available

Data processing & transformation

Raw feeds are cleaned, standardized, and modeled into durable tables. Validation checks reduce errors and ensure consistency over time.
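As a rough illustration of what "cleaned, standardized, and validated" can mean in practice, here is a minimal sketch using only the Python standard library. The field names (`student_id`, `school_code`, `enrolled_on`), the `Enrollment` record, and the MM/DD/YYYY date rule are all hypothetical; real feeds and rules will differ.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class Enrollment:
    """Hypothetical standardized record; not an actual production schema."""
    student_id: str
    school_code: str
    enrolled_on: date


def clean_row(raw: dict) -> Enrollment:
    """Standardize one raw record; raise ValueError if it fails validation."""
    student_id = str(raw.get("student_id") or "").strip()
    if not student_id:
        raise ValueError("missing student_id")
    school_code = str(raw.get("school_code") or "").strip().upper()
    if not school_code:
        raise ValueError("missing school_code")
    # Assumed rule: source dates arrive as MM/DD/YYYY strings.
    # strptime raises ValueError on any other format.
    raw_date = str(raw.get("enrolled_on") or "").strip()
    enrolled_on = datetime.strptime(raw_date, "%m/%d/%Y").date()
    return Enrollment(student_id, school_code, enrolled_on)


def clean_feed(rows):
    """Split a feed into cleaned records and rejected rows with reasons."""
    cleaned, rejected = [], []
    for row in rows:
        try:
            cleaned.append(clean_row(row))
        except ValueError as exc:
            rejected.append((row, str(exc)))
    return cleaned, rejected
```

Keeping rejected rows alongside the reason they failed, rather than silently dropping them, is one way to make data quality issues visible to the source system's owners.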

Orchestration with Apache Airflow

Airflow coordinates each step in the workflow: ingestion, transformation, quality checks, and publishing. Schedules are transparent, runs are monitored, and stakeholders are notified automatically when something requires attention.
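The ordering and alerting behavior described above can be sketched without Airflow itself. The example below is a standard-library stand-in (it uses `graphlib`, not the Airflow API) that runs the four named steps in dependency order and calls a notifier on the first failure. The step names mirror the text; `run_pipeline` and `notify` are hypothetical names chosen for illustration.

```python
from graphlib import TopologicalSorter

# Each step maps to the set of steps that must finish before it starts.
DEPENDENCIES = {
    "ingest": set(),
    "transform": {"ingest"},
    "quality_checks": {"transform"},
    "publish": {"quality_checks"},
}


def run_pipeline(steps, notify):
    """Run steps in dependency order; stop and notify on the first failure.

    steps:  dict mapping step name to a zero-argument callable
    notify: callable that receives a message string when a step fails
    Returns the list of step names that completed successfully.
    """
    completed = []
    for name in TopologicalSorter(DEPENDENCIES).static_order():
        try:
            steps[name]()
        except Exception as exc:
            notify(f"{name} failed: {exc}")
            break  # downstream steps must not run on bad inputs
        completed.append(name)
    return completed
```

In a real deployment an orchestrator such as Airflow adds scheduling, retries, and run history on top of this basic idea; the key property shown here is that publishing never runs if a quality check fails.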

Continuous integration & delivery

Before changes go live, our CI/CD process runs automated tests on pipeline code and data outputs. Failing checks prevent deployment, protecting the accuracy of the metrics your teams rely on.
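A data-output check of the kind a CI job might run could look like the sketch below. The specific rules (non-empty output, required columns populated, unique key) and the function names `check_output` and `ci_exit_code` are assumptions for illustration, not a description of the actual test suite.

```python
def check_output(rows, required_columns, key):
    """Return a list of human-readable failures; an empty list means pass."""
    if not rows:
        return ["output is empty"]
    failures = []
    seen = set()
    for i, row in enumerate(rows):
        # Flag required columns that are absent, None, or empty strings.
        missing = [c for c in required_columns if row.get(c) in (None, "")]
        if missing:
            failures.append(f"row {i}: missing {', '.join(missing)}")
        # Flag repeated key values, which would double-count in metrics.
        k = row.get(key)
        if k in seen:
            failures.append(f"row {i}: duplicate {key}={k}")
        seen.add(k)
    return failures


def ci_exit_code(failures):
    """A non-zero exit code is what a CI runner treats as a failed check."""
    return 1 if failures else 0
```

Returning every failure instead of stopping at the first one gives the person fixing the pipeline a complete picture from a single CI run.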

Storage & analysis in BigQuery

Processed data is stored in Google BigQuery for fast, scalable querying. Data from multiple sources is modeled together so you can answer cross‑system questions from a single source of truth.
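To make "cross‑system questions" concrete, the sketch below joins attendance from one source with assignment submissions from another on a shared student identifier. It uses an in-memory SQLite database as a local stand-in for BigQuery so it runs anywhere; the table names, columns, and sample rows are hypothetical and exist only for illustration.

```python
import sqlite3


def build_demo_db():
    """Create an in-memory database with two hypothetical source tables."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE sis_attendance (student_id TEXT, days_absent INTEGER);
        CREATE TABLE classroom_assignments (
            student_id TEXT, submitted INTEGER, assigned INTEGER
        );
        -- Illustrative sample rows only, not real data.
        INSERT INTO sis_attendance VALUES ('1001', 2), ('1002', 9);
        INSERT INTO classroom_assignments VALUES ('1001', 18, 20), ('1002', 11, 20);
    """)
    return conn


# One query spanning both sources, joined on the shared student identifier.
QUERY = """
    SELECT a.student_id,
           a.days_absent,
           ROUND(100.0 * c.submitted / c.assigned, 1) AS pct_submitted
    FROM sis_attendance AS a
    JOIN classroom_assignments AS c
      ON a.student_id = c.student_id
    ORDER BY a.student_id
"""


def attendance_vs_submissions(conn):
    """Return (student_id, days_absent, pct_submitted) for each student."""
    return conn.execute(QUERY).fetchall()
```

The join only works because both sources have been standardized to the same student identifier upstream, which is the practical meaning of modeling the data together.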

Reporting & visualization

Analysts and leaders connect to curated datasets using Looker Studio, Tableau, Power BI, or Google Sheets. Interactive dashboards and reports make it easy to explore trends and answer day‑to‑day questions.